Mitigating Agent Migrations

How Your Application Can Manage Unexpected Agent Restarts

Agents are not static. At any time, Electric Imp’s impCloud™ load-balancing systems may require that a device’s agent be moved from one server to another. This ‘agent migration’ involves shutting the agent down, transferring its code and any persisted data (recorded using the imp API’s server.save()) to the second host, and then re-instantiating the agent. While this process is taking place, the device will not be able to communicate either with its agent or, by extension, the wider Internet. Likewise, remote services will be unable to talk to the device.

The good news is that agent migration generally takes place very quickly, and many applications will not need to worry about, or even notice, its occurrence. Electric Imp makes no warranties about how long an agent may be offline during a migration, though an outage of up to five seconds is typical. For a small number of applications, any agent outage, however brief, will need to be managed. Note also that in exceptional circumstances, such as a failure of the underlying AWS infrastructure, the downtime can extend to 20-30 minutes or more in the worst case to allow for detection and recovery, although such situations are very infrequent.
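Because data recorded with server.save() is transferred along with the agent, it is available again once the agent restarts on its new host. The following is a minimal sketch of saving and restoring a small table of state. The settings table and its keys are hypothetical, standing in for whatever your application needs to preserve.

```squirrel
// AGENT CODE (sketch)
// Hypothetical default settings; the keys are illustrative only
local settings = { "units" : "celsius",
                   "updatePeriod" : 1800 };

// server.load() returns the table last written with server.save(),
// or an empty table if nothing has been saved yet
local saved = server.load();

if (saved.len() > 0) {
    // The agent has run before: restore its persisted state
    settings = saved;
    server.log("Restored persisted settings at agent start");
} else {
    // First run: persist the defaults. server.save() returns 0 on success
    local result = server.save(settings);
    if (result != 0) server.error("Could not save settings, code: " + result);
}
```

Note that only the saved table survives a restart. Timers, registered handlers and the values of ordinary variables are lost and must be re-established by the agent code when it starts.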

Agent Migration Mitigation Code Example

As an example, consider a simple application which uses a set of five WS2812 RGB LEDs to display a colorful, at-a-glance readout of the current and future outdoor temperatures over the next 12 hours. It has been successfully powered on by an end-user and activated. Agent instantiation is almost instantaneous, so when the device has completed its setup routines, it signals its readiness to its agent. The agent now estimates the device’s geographical location and then uses that data to obtain a 12-hour weather forecast from which it determines the current and four future temperature readings. This information is relayed to the device, which uses it to illuminate the five LEDs in suitable colors.

Every 30 minutes, the agent acquires the latest temperature data from an online service and sends the information to the device. It begins this cycle in response to the device’s readiness message. Here is the agent code:

```squirrel
#require "DarkSky.class.nut:1.0.1"

// Interval between forecast requests, in seconds (30 minutes)
const DURATION = 1800;

local forecast = null;
local locator = null;
local forecastTimer = null;
local location = { "latitude"  : 0.0,
                   "longitude" : 0.0 };

function forecastCallback(error, data) {
    if (data) {
        // Take the readings for now and for 3, 6, 9 and 12 hours ahead
        local day = "";
        local hours = data.hourly.data;
        for (local i = 0 ; i < 13 ; i += 3) {
            // Convert each reading from Fahrenheit to Celsius
            local tempCelsius = (hours[i].temperature.tofloat() - 32.0) * 0.556;
            day = day + format("%.1f:", tempCelsius);
        }

        // Remove the trailing colon and relay the readings to the device
        day = day.slice(0, day.len() - 1);
        device.send("temps", day);
    }

    // Schedule the next forecast request
    if (forecastTimer) imp.cancelwakeup(forecastTimer);
    forecastTimer = imp.wakeup(DURATION, getForecast);
}

function getForecast() {
    forecast.forecastRequest(location.longitude, location.latitude, forecastCallback);
}

// RUNTIME START
// Instantiate Dark Sky API object
forecast = DarkSky("MY_DARK_SKY_API_KEY");

// Watch for the device signalling its readiness
device.on("ready", function(ignoredData) {
    getForecast();
});
```

This works well in most circumstances. Should the device ever go offline for some reason (its end-user power-cycles it, or it suffers a WiFi or broadband outage), the device will eventually come back online and re-signal its readiness, in turn causing the agent to re-locate it and to request an up-to-date local weather forecast.

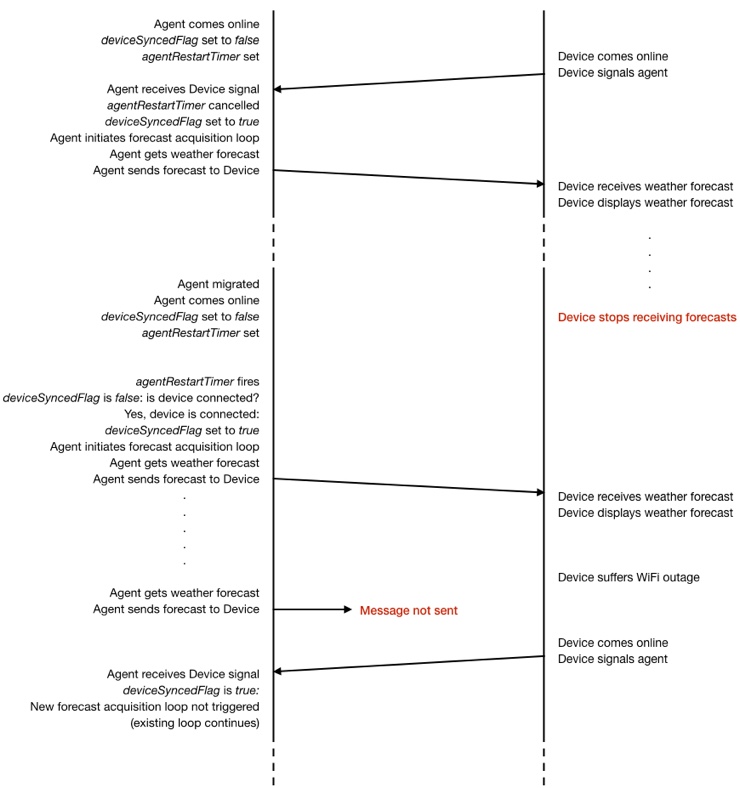

But what if the agent migrates? In this case, when the agent comes back online, it will do nothing until the device signals its readiness — which, since the device has already done so, it will not do again unless it power-cycles. Thanks to the agent migration, the device will receive no more temperature updates.

To remedy this, we need a means for the agent code to determine when it starts up whether this is its first run or a restart.

There are a number of strategies you can employ to deal with this situation. One of the simplest is to set up a timer which fires a short period after the agent has started. If the device signals its readiness before this timer fires, then in all probability both agent and device started together, and the timer can be cancelled. If the timer fires, the code should check whether the device is connected: if it is, then the agent restarted separately from the device, almost certainly because of a migration. All the agent needs to do now is restart the weather forecast update cycle.

Here is how we update our code to deal with these situations:

```squirrel
local agentRestartTimer = null;
local deviceSyncedFlag = false;

function deviceReady(ignoredData) {
    if (agentRestartTimer != null) {
        imp.cancelwakeup(agentRestartTimer);
        agentRestartTimer = null;
    }

    // If 'deviceSyncedFlag' is already true, we don't need to
    // restart the forecasting cycle, otherwise we do
    if (!deviceSyncedFlag) getForecast();
    deviceSyncedFlag = true;
}

// RUNTIME START
// Watch for the device signalling its readiness
device.on("ready", deviceReady);

// Check for an agent restart
agentRestartTimer = imp.wakeup(30, function() {
    agentRestartTimer = null;
    if (!deviceSyncedFlag && device.isconnected()) {
        deviceReady(true);
    }
});
```

How do the changes work? When the agent and device start for the first time, the value of deviceSyncedFlag is false. When the device quickly signals its readiness, a new function deviceReady() is called and this sets deviceSyncedFlag to true, and begins the cycle of getting forecasts and relaying that data to the device.

Meanwhile, a timer is set to fire 30 seconds after the agent’s start. If the device has not made contact by then (i.e. deviceSyncedFlag is still false), the timer’s callback checks whether the device is connected. If the device is connected, the callback code calls deviceReady() as above. If the device is not online, we can do nothing at this point: the device should check in when it comes back online.

Of course, in the first-run scenario, the device will have signalled its readiness before the agentRestartTimer timer fires, which is why we cancel that timer in deviceReady(). But consider what happens if the agent is migrated by the impCloud and restarts. In this case, the agentRestartTimer timer is not cancelled (because the device, which is still running, doesn’t send a readiness message). The first check in the timer-firing callback — is the value of deviceSyncedFlag false? — passes because this is the default state for the newly instantiated agent. The code now sees if the device is online and connected to the server. Because the device is connected, the code calls deviceReady() to recommence the cycle of getting forecasts and relaying that data to the device, and to set the state flag, deviceSyncedFlag. After a very brief interruption, the device again begins receiving forecast data without having had to re-signal its readiness to do so.

What if the device itself subsequently goes offline? The agent continues to send forecast data as if the device is present, but this information actually never leaves the impCloud because the device is not connected. At some later time, the device restarts and once again signals its readiness. Because we set deviceSyncedFlag to true, this signal does not begin a second, parallel forecast acquisition and relay cycle — instead the device now receives the agent-sent data from the ongoing cycle.

Time diagram showing agent migration mitigation in operation

In short, if the device goes offline for a time, when it comes back up, it continues to receive the data it expects because the agent is already sending it. If the agent goes offline for a moment, when it comes back up, it starts to send data to the device without being prompted to do so.

This technique may be readily adapted for other application scenarios. The essential process is to ensure that whenever the device starts up it informs its agent, and that whenever the agent starts up it checks not only whether the device is connected but also whether it has already signalled its readiness. Why use a 30-second timer before making this check? To give the device sufficient time to come online, perform its setup tasks and signal the agent. If it fails to do so, we can be sure that it is either online already (i.e. the agent is restarting after migration) or is offline at this time.
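The device's half of this arrangement is not shown above, so here is a minimal sketch of what it might look like. The message names "temps" and "ready" are taken from the agent code. The showTemperatures() function is a hypothetical stand-in for the application's LED-driving routine, and it simply logs the values it receives.

```squirrel
// DEVICE CODE (sketch)
// Hypothetical stand-in for the code that sets the five LEDs' colors
function showTemperatures(temps) {
    foreach (index, temp in temps) {
        server.log(format("LED %d: %s Celsius", index, temp));
    }
}

// Register the handler for the agent's forecast messages first,
// so that it is in place before any message can arrive
agent.on("temps", function(data) {
    // The agent sends the readings as a colon-separated string
    showTemperatures(split(data, ":"));
});

// Perform any other setup here, then tell the agent we are ready
agent.send("ready", true);
```

Signalling readiness last means that by the time the agent responds, every handler the device needs has already been registered.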

Agent-to-device Messaging

You should bear in mind that attempts by the agent to send data to the device when it is not accessible will generate an error message, ERROR: no handler for device.send(), though this is not an error that will cause the agent to restart. The usual way to debug such errors is to check that every instance of device.send() in the agent code is paired with an agent.on() in the device code. The first parameter of both calls, a string containing an identifier for the message being sent, must match across the two paired calls. A common source of error is a mistyped message name string in either of the two calls.

What if you have eliminated such issues as causes of the error? In this case, it may be that you are experiencing a race condition. If, for instance, your agent code calls device.send() as soon as it boots, but the paired agent.on() is near the bottom of your device code, the message can be sent and received before the device’s Squirrel interpreter has been able to register the handler function you assign in the agent.on() call. Because the code hasn’t yet performed this registration when the message arrives, it will throw the above error.

If this is the case, you can apply any of the following techniques:

Register the device’s message handlers as early as possible in the code sequence.

Delay the device.send() call on the agent side to allow the device time to complete its setup. For example:

```squirrel
imp.wakeup(1, function() {
    // Wait 1 second for the device to boot before
    // sending it the first message
    device.send("my.message", data);
});
```

Have the device signal its readiness to the agent and ensure that no messages are sent from agent to device until this signal has been received. For example:

```squirrel
device.on("ready", function(ignoredData) {
    // Device has signalled its readiness - send over saved settings
    device.send("clock.set.preferences", settingsTable);
});
```

An equivalent error involves a missing handler for agent.send(). This one is posted by the agent when a message sent by the device has no matching device.on(). Again, this is caused either by a message from the device arriving before its intended handler function has been registered, or by one of the other causes described above.